Where intelligence is permitted to act — and required to stop.

The purpose of AI and automation is not to remove the human — but to return them to the center.


Related Divisions

Core Division

Operational Enforcement

Anonymization & Summarization — Executed within Incident Nexus

AI operating within governed architecture.

Anonymization and summarization executed under human authority.

Hard Stops

Automation Termination Boundaries

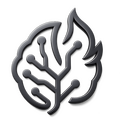

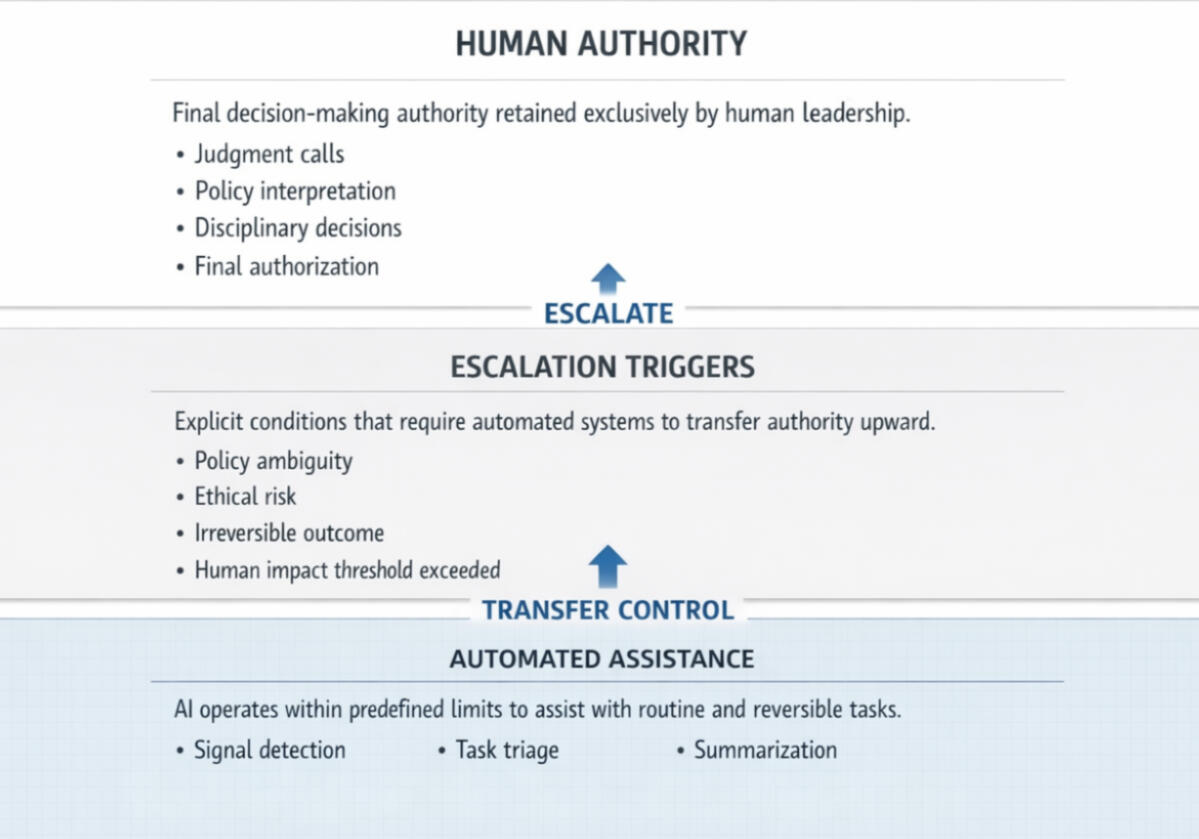

What This Governs

Automation Termination Boundaries establish the hard stop lines for intelligent systems. They define:

- Which actions automation is explicitly forbidden from taking

- The precise conditions under which autonomous execution must halt immediately

- The domains where confidence, optimization, or pattern recognition are irrelevant

- The architectural separation between assistance and authority

These boundaries are enforced by system design, not by policy, preference, or discretion.

Why This Exists

Institutions are not afraid of AI doing too little.

They are afraid of it doing one thing too much.

Most failures in intelligent systems do not occur because automation was inaccurate; they occur because it was unchecked. When stopping conditions are vague, authority becomes blurred. When authority is blurred, accountability collapses.

This section exists to remove ambiguity entirely.

Automation Termination Boundaries establish that autonomy is conditional, limited, and revocable by design. Restraint is not an afterthought; it is foundational.

What This Guarantees

- Automation cannot proceed beyond defined limits, regardless of confidence

- No autonomous action can cross into protected human judgment domains

- All escalation beyond this boundary requires explicit human authority

Structural Principle:

Termination is not failure.

Termination is governance.
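The hard stops described above could be enforced in code along these lines. This is a minimal Python sketch, not CortexForge's implementation. `FORBIDDEN_ACTIONS`, `HardStop`, `ProposedAction`, and `execute` are hypothetical names. The point it illustrates is that the boundary check runs before any confidence score is consulted and never reads it.

```python
from dataclasses import dataclass

# Hypothetical set of actions automation may never take, whatever its confidence.
FORBIDDEN_ACTIONS = frozenset({"issue_discipline", "close_case", "delete_record"})


class HardStop(Exception):
    """Raised when automation reaches a termination boundary."""


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float  # model confidence in [0, 1]; deliberately ignored by the boundary check


def execute(action: ProposedAction) -> str:
    # The boundary is checked first and confidence is never read:
    # a 0.99-confidence forbidden action halts exactly like a 0.10 one.
    if action.name in FORBIDDEN_ACTIONS:
        raise HardStop(f"'{action.name}' requires explicit human authority")
    return f"executed {action.name}"
```

In this sketch the forbidden list is a static structure rather than a tunable threshold, so there is no confidence value high enough to unlock it.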

SACRED DECISIONS

Protected Human Judgments

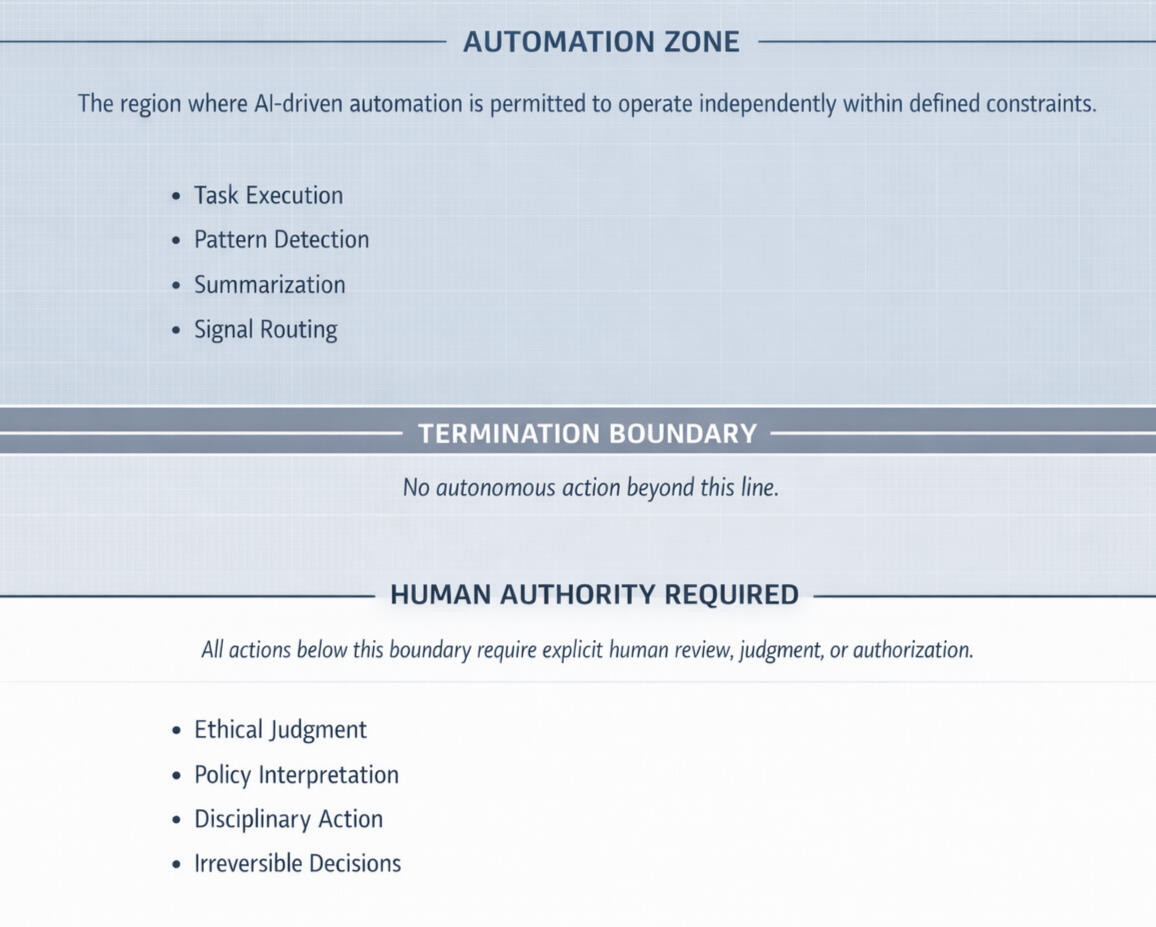

What This Governs

Protected Human Judgments establish human-only decision domains within an intelligent system. In these domains, automation may support a decision but is never permitted to make it.

This includes decisions that require:

• moral reasoning

• contextual discretion

• empathy and situational awareness

• accountable human authority

Examples of protected domains include:

• disciplinary determinations

• care and well-being decisions

• context-sensitive leadership judgment

• ethical interpretations of policy

• outcomes with irreversible human impact

In these domains, intelligence may prepare information, but authority never transfers.

Why This Exists

Optimization without restraint erodes trust.

Many systems fail not because they are inaccurate, but because they attempt to flatten human complexity into metrics. When judgment is reduced to confidence scores or efficiency thresholds, people feel surveilled, dehumanized, or overridden.

This section exists to make a different claim:

Some decisions are protected because they are human.

Not despite it.

Protected Human Judgments ensure that technology never replaces the very reasoning it is meant to support.

This is trauma-informed design at a structural level.

What This Guarantees

• No disciplinary, ethical, or care-related outcome is ever decided by automation

• Human discretion cannot be bypassed by confidence, urgency, or pattern recognition

• Efficiency never outranks dignity

Structural Principle:

If a decision defines a human outcome, it cannot be finalized by a machine.
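One way to express "intelligence may prepare information, but authority never transfers" is to let automation fill only a preparation field, while the outcome field can be set only by a human actor. The following is a hedged sketch. `PROTECTED_DOMAINS`, `Actor`, `Decision`, and `finalize` are hypothetical names, and the domain labels loosely mirror the examples listed above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

# Hypothetical protected domains, loosely mirroring the examples in this section.
PROTECTED_DOMAINS = frozenset({"discipline", "care", "leadership_judgment", "policy_ethics"})


class Actor(Enum):
    HUMAN = "human"
    AUTOMATION = "automation"


@dataclass
class Decision:
    domain: str
    prepared_summary: str            # what intelligence is allowed to contribute
    outcome: Optional[str] = None    # stays None until someone permitted finalizes it
    decided_by: Optional[str] = None


def finalize(decision: Decision, outcome: str, actor: Actor, actor_id: str) -> Decision:
    # In protected domains, only a human actor may set the outcome.
    if decision.domain in PROTECTED_DOMAINS and actor is not Actor.HUMAN:
        raise PermissionError(f"domain '{decision.domain}' is human-only")
    decision.outcome = outcome
    decision.decided_by = actor_id
    return decision
```

A rejected automated attempt leaves `outcome` untouched, so the prepared summary is still available for the human who decides.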

Clear Handoffs

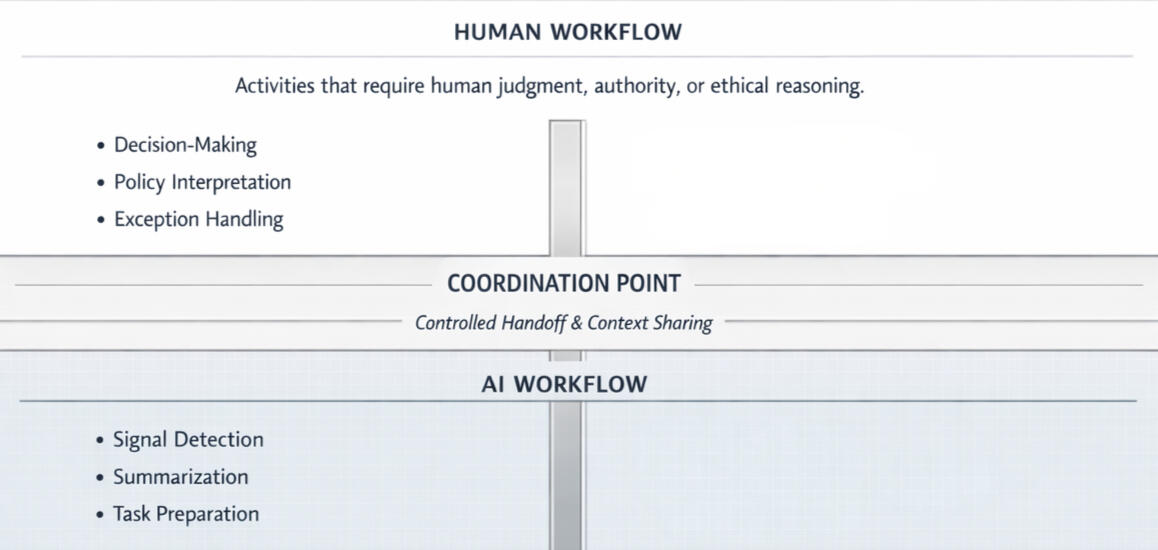

Human–AI Coordination Rules

What This Governs

Human–AI Coordination Rules establish explicit interaction contracts between people and intelligent systems. They define:

• Who acts first

• Who verifies

• Who escalates

• Who owns the final outcome

These rules do not ask what the system can do; they define how responsibility is shared without collision.

Why This Exists

Most system failures do not come from bad intelligence.

They come from unclear coordination.

When humans and systems operate in parallel without rules:

• Humans assume the system handled it

• Systems assume a human will intervene

• Responsibility disappears into the gap

This is how silent failures are born.

What This Guarantees

• Humans never fight the system

• The system never surprises humans

• Responsibility never falls between roles

• Accountability is always traceable

Structural Principle:

Responsibility cannot be shared at the point of execution.
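An interaction contract like the one described above can be checked for gaps before any workflow runs. The sketch below assumes a contract has exactly the four roles named in this section. `HandoffContract` and `validate` are hypothetical, and so is the extra rule that an actor may not verify its own work.

```python
from dataclasses import dataclass
from typing import List, Set


# The four fields mirror "who acts first, who verifies, who escalates,
# and who owns the final outcome"; role names are hypothetical.
@dataclass(frozen=True)
class HandoffContract:
    acts_first: str
    verifies: str
    escalates_to: str
    owns_outcome: str


def validate(contract: HandoffContract, human_roles: Set[str]) -> List[str]:
    """Return a list of problems; an empty list means the contract is usable."""
    problems = []
    for field_name in ("acts_first", "verifies", "escalates_to", "owns_outcome"):
        if not getattr(contract, field_name):
            # An unassigned role is exactly the gap where responsibility disappears.
            problems.append(f"{field_name} is unassigned")
    if contract.owns_outcome and contract.owns_outcome not in human_roles:
        problems.append("final outcome must be owned by a single human role")
    if contract.acts_first and contract.acts_first == contract.verifies:
        problems.append("the actor cannot verify its own work")
    return problems
```

Because `owns_outcome` is a single field, the sketch structurally allows one owner per contract, which matches the principle that responsibility is not shared at execution.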

Governed Insight

Governed Intelligence Boundaries

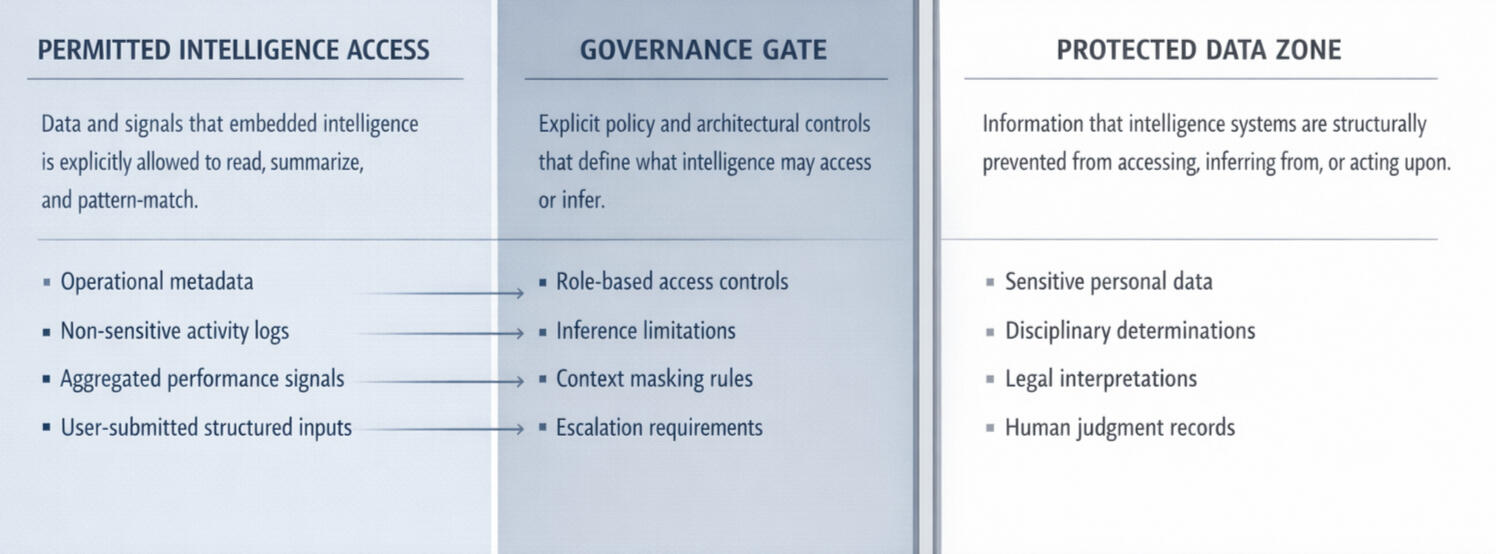

What This Governs

Governed Intelligence Boundaries establish explicit architectural limits on intelligence behavior. They define:

• Which data domains intelligence layers may access

• What types of inference are permitted or prohibited

• Which datasets may never be cross-referenced

• How visibility, inference, and action are separated by design

This is not a policy document.

These boundaries are enforced at the system architecture level.

Intelligence does not “request” access.

It is either structurally allowed, or it cannot see the data at all.

Why This Exists

When intelligence systems fail governance audits, it is rarely because of malicious intent.

The failure mode is almost always the same: unchecked inference.

This section exists to ensure:

• Insight never outruns authorization

• Correlation does not become surveillance

• Capability does not silently expand over time

Governance must be architectural, not reactive.

What This Guarantees

With these boundaries enforced, intelligence layers are:

• compliant by construction

• auditable by design

• trusted by leadership

• defensible under regulatory scrutiny

Structural Principle:

Capability does not imply permission.
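The idea that intelligence "does not request access" can be illustrated by building each layer a read-only view that contains only its permitted domains, plus a separate table of joins that are refused even when both sides are visible. This is a sketch under assumed names. `ALLOWED_DOMAINS`, `FORBIDDEN_JOINS`, `scoped_view`, and `check_join` are hypothetical. `MappingProxyType` is the standard-library read-only mapping.

```python
from types import MappingProxyType
from typing import Any, Mapping

# Hypothetical boundary tables: which data domains each intelligence layer may see.
ALLOWED_DOMAINS = {
    "summarizer": frozenset({"incident_reports"}),
    "trend_analyzer": frozenset({"incident_reports", "shift_schedules"}),
}
# Domain pairs that may never be cross-referenced, even by a layer allowed to see both.
FORBIDDEN_JOINS = frozenset({frozenset({"incident_reports", "shift_schedules"})})


def scoped_view(layer: str, store: Mapping[str, Any]) -> Mapping[str, Any]:
    """Build a read-only view that simply does not contain out-of-scope domains."""
    allowed = ALLOWED_DOMAINS.get(layer, frozenset())  # unknown layers see nothing
    return MappingProxyType({k: v for k, v in store.items() if k in allowed})


def check_join(domain_a: str, domain_b: str) -> None:
    # Prohibited correlation is refused before any data is combined.
    if frozenset({domain_a, domain_b}) in FORBIDDEN_JOINS:
        raise PermissionError(f"cross-referencing {domain_a} with {domain_b} is prohibited")
```

The `trend_analyzer` entry shows "capability does not imply permission": the layer can see both domains but still cannot correlate them.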

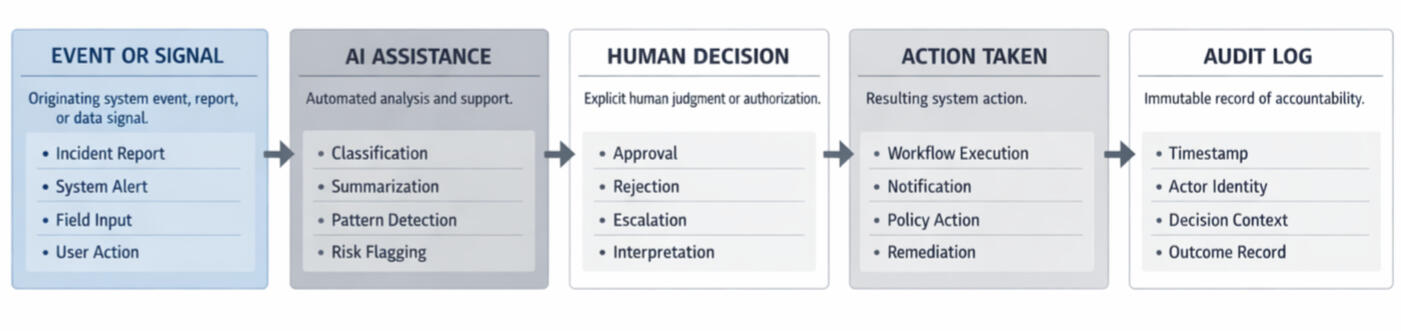

Nothing Untraceable

AUDITABILITY & ACCOUNTABILITY CHAINS

What This Governs

Auditability & Accountability Chains govern:

• How actions are initiated, reviewed, approved, modified, or stopped

• How AI recommendations are recorded alongside human decisions

• How responsibility is preserved across automated and human workflows

• How outcomes remain explainable long after execution

This applies to every system action, including:

• automated classifications

• summaries and recommendations

• escalations and approvals

• workflow executions

• policy actions and remediations

Nothing occurs outside the chain.

Why This Exists

Institutions do not lose trust because mistakes happen.

They lose trust because no one can explain what happened.

Most automation failures are not technical failures but memory failures:

• decisions without authors

• actions without context

• systems that cannot answer “why”

This section exists to eliminate that failure mode entirely.

Not by policy.

By architecture.

What This Guarantees

• No anonymous actions occur

• No invisible decisions exist

• No authority is implied

• No automation escapes review

Structural Principle:

If an action cannot be explained, it cannot occur.
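A common way to make an accountability chain tamper-evident is a hash-linked, append-only log. In such a log, each entry stores the hash of the previous entry, and an AI recommendation is recorded alongside the human or automated actor that acted on it. The sketch below is illustrative only and is not the document's stated mechanism. `AuditChain`, its fields, and the actor naming scheme are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditChain:
    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, rationale: str,
               ai_recommendation: Optional[str] = None) -> dict:
        # "If an action cannot be explained, it cannot occur": no author or rationale, no entry.
        if not actor or not rationale.strip():
            raise ValueError("every action needs a named actor and an explanation")
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "rationale": rationale,
                "ai_recommendation": ai_recommendation, "prev": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every link; an edited or reordered entry, or a deleted non-final one, fails."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

A plain hash chain cannot detect truncation of its newest entries. Real deployments would typically anchor the latest hash somewhere external, which this sketch leaves out.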

Architectural Constraint

Intent Preservation

What This Governs

This section governs how the original purpose of a system is preserved as intelligence, automation, and optimization scale.

It defines:

• How founding intent is explicitly encoded into system architecture

• Where intent is bound to success metrics, not inferred from outcomes

• How optimization pathways are constrained by mandate

• How deviation from purpose is detected, flagged, and halted

• How future modifications inherit limits, not just capability

Intent is treated as an operational constraint, not a mission statement.

Why This Exists

Most ethical breakdowns do not come from bad intent.

They come from unconstrained optimization.

As systems improve, they naturally:

• prioritize measurable outputs over original purpose

• reward efficiency even when it undermines meaning

• redefine “success” based on what is easiest to optimize

When intent is undocumented, systems drift quietly.

When intent is unenforced, drift becomes normalized.

This section exists to prevent mandate erosion: the point at which a system still functions correctly but no longer functions as intended.

How Intent Is Preserved (Structurally)

Intent is enforced through design coupling, not review.

Specifically:

• Every system capability is linked to a declared purpose domain

• Optimization metrics are scoped to that domain only

• New automation paths cannot be added without inheriting the original intent constraints

• If system outputs begin optimizing for adjacent or conflicting goals, execution halts

• Ambiguous optimization triggers escalation, not autonomy

The system does not ask what it could do.

It is restricted to why it was built.

What This Guarantees

With Intent Preservation enforced:

• Systems cannot silently redefine success

• Optimization cannot expand beyond mandate

• Capability growth does not imply purpose expansion

• Drift is surfaced before it becomes institutional

• Humans retain authority over why the system exists

Accuracy does not grant permission.

Purpose does.
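The structural rules above can be sketched as follows. Each capability carries a declared purpose domain and the metrics scoped to it. Optimizing toward a metric that serves a different purpose halts, and an unrecognized or undeclared metric escalates to a human. New capabilities can only narrow the metrics they inherit. `Capability`, `METRIC_PURPOSE`, `check_optimization`, and `derive` are hypothetical names, and the metric labels are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"
    HALT = "halt"


@dataclass(frozen=True)
class Capability:
    name: str
    purpose_domain: str         # declared purpose this capability serves
    allowed_metrics: frozenset  # metrics scoped to that purpose domain only


# Hypothetical mapping from each known metric to the purpose it measures.
METRIC_PURPOSE = {
    "time_to_human_review": "incident_support",
    "summary_completeness": "incident_support",
    "cases_auto_closed": "throughput",
}


def check_optimization(cap: Capability, metric: str) -> Verdict:
    if metric in cap.allowed_metrics:
        return Verdict.PROCEED
    purpose = METRIC_PURPOSE.get(metric)
    if purpose is None:
        return Verdict.ESCALATE  # ambiguous optimization goes to a human, not to autonomy
    if purpose != cap.purpose_domain:
        return Verdict.HALT      # optimizing for an adjacent or conflicting goal
    return Verdict.ESCALATE      # in-domain but undeclared: only a human may extend the mandate


def derive(parent: Capability, name: str, metrics: frozenset) -> Capability:
    # New automation paths inherit the parent's purpose and may narrow, never widen, its metrics.
    if not metrics <= parent.allowed_metrics:
        raise ValueError("a derived capability cannot add metrics beyond its mandate")
    return Capability(name, parent.purpose_domain, frozenset(metrics))
```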

Ethical Load Management

Cognitive Load

What This Governs

This section governs how much mental burden an intelligent system is allowed to impose on humans.

It defines:

• Alert thresholds

• Escalation frequency

• Review volume

• Decision density

• Interruption tolerance

Attention is treated as a finite ethical resource.

Why This Exists

Most harm caused by intelligent systems is not acute.

It is cumulative and exhausting.

Systems fail ethically when they:

• surface too much information

• demand constant review

• normalize interruption

• shift cognitive labor instead of removing it

Tired humans do not make better decisions.

They make defensive ones.

This section exists to prevent systems from externalizing their complexity onto people.

How Cognitive Load Is Limited (Structurally)

Cognitive safety is enforced through burden ceilings:

• Alerts are rate-limited per role, not by total volume

• Escalations batch rather than interrupt

• Non-critical signals are suppressed by default

• Systems must justify attention requests

• Silence is a valid and preferred state

If a system increases cognitive demand:

• it must remove an equivalent burden elsewhere

• or the increase is denied

No system may grow by exhausting its operators.

What This Guarantees

With Cognitive Safeguards enforced:

• Humans are not overwhelmed by intelligence

• Decision quality is preserved under stress

• Automation reduces mental load instead of redistributing it

• Attention remains protected

• Burnout is treated as a system failure, not a personal oneIf the system makes people tired, it is malfunctioning.
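A burden ceiling can be sketched as a per-role sliding-window limit on interruptions. Non-critical signals go straight to a digest, signals over the ceiling are batched rather than delivered, and requests without a justification are rejected. `AttentionBudget` and its parameters are hypothetical, and timestamps are passed in explicitly so the behavior is deterministic.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class AttentionBudget:
    """Hypothetical burden ceiling: at most `limit` interruptions per role per `window` seconds."""
    limit: int
    window: float
    sent_log: dict = field(default_factory=lambda: defaultdict(deque))  # role -> interrupt times
    digest: dict = field(default_factory=lambda: defaultdict(list))     # role -> deferred signals

    def submit(self, role: str, signal: str, critical: bool, justification: str, now: float) -> str:
        if not justification.strip():
            return "rejected"              # systems must justify attention requests
        if not critical:
            self.digest[role].append(signal)
            return "batched"               # non-critical signals never interrupt
        sent = self.sent_log[role]
        while sent and now - sent[0] >= self.window:
            sent.popleft()                 # drop interruptions older than the sliding window
        if len(sent) >= self.limit:
            self.digest[role].append(signal)
            return "batched"               # ceiling reached: batch rather than interrupt
        sent.append(now)
        return "interrupt"
```

Whether a critical signal should ever be deferred once a role's ceiling is reached is a policy decision. This sketch batches it only to keep the example small.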

Fail Safe

Failure Containment & Graceful Degradation

What This Governs

This section governs how systems behave when they are wrong, uncertain, degraded, or misused.

It defines:

• How confidence is withdrawn

• How scope collapses safely

• How humans are notified

• How recovery paths are surfaced

• How execution is paused without chaos

Failure is assumed.

Damage is not.

Why This Exists

Ethics are not tested during success.

They are tested during failure.

Most systems fail ethically when:

• uncertainty is hidden

• confidence is overstated

• humans are left unsupported

• systems continue “just in case”

This section exists to ensure that intelligence never abandons people during breakdowns.

How Failure Is Contained (Structurally)

Failure containment is enforced through graceful degradation:

• Authority is retracted automatically when confidence falls below defined thresholds

• Capabilities reduce rather than persist

• Automation narrows instead of escalating

• Humans are informed before impact

• Manual control is always recoverable

If the system cannot operate safely, it steps aside.

What This Guarantees

With Failure Containment enforced:

• Systems do not pretend certainty

• Errors do not cascade silently

• Humans are never surprised

• Recovery is explicit

• Trust survives breakdowns

A system that fails loudly but clearly is ethical.

A system that fails silently is not.
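Graceful degradation of this kind can be sketched as a mapping from confidence to a level of authority. The level narrows as confidence drops, falls back to manual control whenever the system is degraded or its confidence is not a valid probability, and triggers a human notification whenever it drops below full operation. `Authority`, `THRESHOLDS`, `authority_for`, and `step` are hypothetical, and the threshold values are placeholders that humans would own.

```python
from enum import IntEnum
from typing import Callable


class Authority(IntEnum):
    MANUAL_ONLY = 0      # system steps aside; humans hold full control
    RECOMMEND = 1        # system may suggest, never act
    ACT_WITH_REVIEW = 2  # system may act, subject to human review


# Hypothetical thresholds, checked from highest to lowest.
THRESHOLDS = [(0.90, Authority.ACT_WITH_REVIEW), (0.60, Authority.RECOMMEND)]


def authority_for(confidence: float, degraded: bool) -> Authority:
    """Narrow authority as confidence drops; an unknown state never widens it."""
    if degraded or not 0.0 <= confidence <= 1.0:  # also catches NaN
        return Authority.MANUAL_ONLY
    for floor, level in THRESHOLDS:
        if confidence >= floor:
            return level
    return Authority.MANUAL_ONLY


def step(confidence: float, degraded: bool, notify: Callable[[str], None]) -> Authority:
    level = authority_for(confidence, degraded)
    if level < Authority.ACT_WITH_REVIEW:
        # Humans are notified before the reduced level is returned to the caller and applied.
        notify(f"authority reduced to {level.name} (confidence={confidence:.2f})")
    return level
```

Treating an out-of-range or NaN confidence as "manual only" reflects the principle that a system which cannot describe its own state should step aside rather than continue "just in case".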

Owned Outcomes

Moral Ownership & Decision Traceability

What This Governs

This section governs who owns outcomes, not just actions, within intelligent systems.

It defines:

• How responsibility is bound to decisions

• Where ownership cannot be delegated

• How “recommendation vs decision” is preserved

• How outcomes remain attributable over time

• How “the system decided” is made structurally impossible

Execution may be automated.

Ownership may not.

Why This Exists

Organizations do not lose trust because things go wrong.

They lose trust because no one can say who was responsible.

Automation often obscures authorship by:

• fragmenting decisions

• distributing actions

• diffusing accountability

This section exists to ensure that responsibility never evaporates into the system.

How Ownership Is Enforced (Structurally)

Ownership is enforced through binding requirements:

• Every outcome is linked to a human authority

• Execution never occurs without an accountable owner

• Responsibility persists after execution

• History survives turnover and time

If no one can own the outcome, it cannot occur.

What This Guarantees

With Outcome Ownership enforced:

• No action lacks an owner

• No decision hides behind automation

• Accountability remains legible

• Authority is preserved

• Ethics remain enforceable

Automation may act.

Humans remain responsible.
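The binding requirements above can be sketched as a ledger that refuses to commit an outcome without a registered human owner and keeps any automated recommender in a separate field. It also refuses to reassign ownership once it has been recorded. `HUMAN_OWNERS`, `OutcomeRecord`, `LEDGER`, and `commit_outcome` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical registry of accountable human owners (for example, staff IDs).
HUMAN_OWNERS = {"j.doe", "a.khan"}


@dataclass(frozen=True)
class OutcomeRecord:
    outcome_id: str
    owner: str                     # an accountable human, never "system"
    recommended_by: Optional[str]  # automation may recommend; kept separate from ownership
    decided_at: str                # UTC timestamp in ISO 8601 form


LEDGER: dict = {}  # outcome_id -> OutcomeRecord


def commit_outcome(outcome_id: str, owner: str,
                   recommended_by: Optional[str] = None) -> OutcomeRecord:
    if owner not in HUMAN_OWNERS:
        raise PermissionError("no accountable human owner: outcome cannot occur")
    if outcome_id in LEDGER:
        raise ValueError("ownership is permanent; record a new outcome instead of reassigning")
    record = OutcomeRecord(outcome_id, owner, recommended_by,
                           datetime.now(timezone.utc).isoformat())
    LEDGER[outcome_id] = record
    return record
```

Because each record is frozen and stored by value, removing someone from `HUMAN_OWNERS` later (for example, after turnover) does not erase who owned past outcomes.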